diff --git a/examples/README.md b/examples/README.md
index ee8b1bc..0438cb0 100644
--- a/examples/README.md
+++ b/examples/README.md
@@ -23,24 +23,6 @@ height = int(video_stream['height'])
)
```

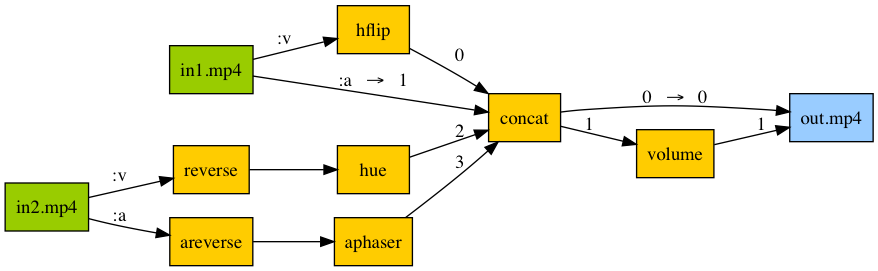

-## Process audio and video simultaneously
-
- -
-```python
-in1 = ffmpeg.input('in1.mp4')
-in2 = ffmpeg.input('in2.mp4')
-v1 = in1['v'].hflip()
-a1 = in1['a']
-v2 = in2['v'].filter_('reverse').filter_('hue', s=0)
-a2 = in2['a'].filter_('areverse').filter_('aphaser')
-joined = ffmpeg.concat(v1, a1, v2, a2, v=1, a=1).node
-v3 = joined[0]
-a3 = joined[1].filter_('volume', 0.8)
-out = ffmpeg.output(v3, a3, 'out.mp4')
-out.run()
-```
-
## [Convert video to numpy array](https://github.com/kkroening/ffmpeg-python/blob/master/examples/ffmpeg-numpy.ipynb)

@@ -86,6 +68,24 @@ out, _ = (ffmpeg
)
```

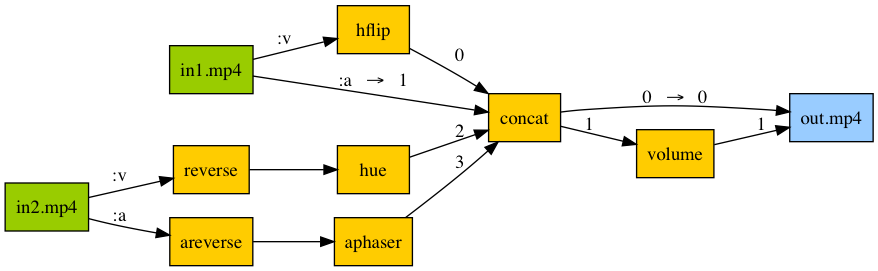

+## Audio/video pipeline
+
+ +
+```python
+in1 = ffmpeg.input('in1.mp4')
+in2 = ffmpeg.input('in2.mp4')
+v1 = in1['v'].hflip()
+a1 = in1['a']
+v2 = in2['v'].filter_('reverse').filter_('hue', s=0)
+a2 = in2['a'].filter_('areverse').filter_('aphaser')
+joined = ffmpeg.concat(v1, a1, v2, a2, v=1, a=1).node
+v3 = joined[0]
+a3 = joined[1].filter_('volume', 0.8)
+out = ffmpeg.output(v3, a3, 'out.mp4')
+out.run()
+```
+
## [Jupyter Frame Viewer](https://github.com/kkroening/ffmpeg-python/blob/master/examples/ffmpeg-numpy.ipynb)

-

-```python

-in1 = ffmpeg.input('in1.mp4')

-in2 = ffmpeg.input('in2.mp4')

-v1 = in1['v'].hflip()

-a1 = in1['a']

-v2 = in2['v'].filter_('reverse').filter_('hue', s=0)

-a2 = in2['a'].filter_('areverse').filter_('aphaser')

-joined = ffmpeg.concat(v1, a1, v2, a2, v=1, a=1).node

-v3 = joined[0]

-a3 = joined[1].filter_('volume', 0.8)

-out = ffmpeg.output(v3, a3, 'out.mp4')

-out.run()

-```

-

## [Convert video to numpy array](https://github.com/kkroening/ffmpeg-python/blob/master/examples/ffmpeg-numpy.ipynb)

-

-```python

-in1 = ffmpeg.input('in1.mp4')

-in2 = ffmpeg.input('in2.mp4')

-v1 = in1['v'].hflip()

-a1 = in1['a']

-v2 = in2['v'].filter_('reverse').filter_('hue', s=0)

-a2 = in2['a'].filter_('areverse').filter_('aphaser')

-joined = ffmpeg.concat(v1, a1, v2, a2, v=1, a=1).node

-v3 = joined[0]

-a3 = joined[1].filter_('volume', 0.8)

-out = ffmpeg.output(v3, a3, 'out.mp4')

-out.run()

-```

-

## [Convert video to numpy array](https://github.com/kkroening/ffmpeg-python/blob/master/examples/ffmpeg-numpy.ipynb)

@@ -86,6 +68,24 @@ out, _ = (ffmpeg

)

```

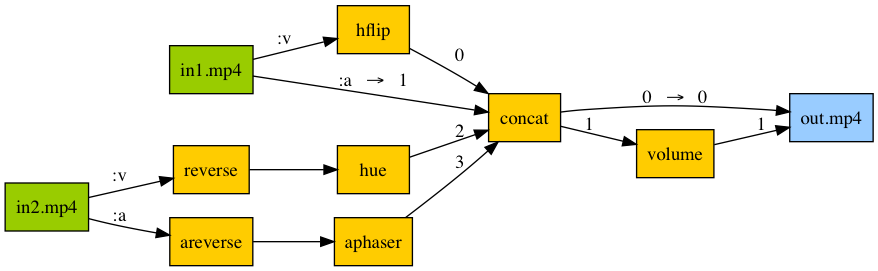

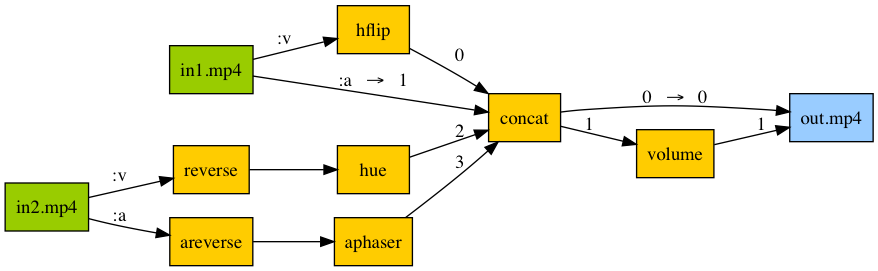

+## Audio/video pipeline

+

+

@@ -86,6 +68,24 @@ out, _ = (ffmpeg

)

```

+## Audio/video pipeline

+

+ +

+```python

+in1 = ffmpeg.input('in1.mp4')

+in2 = ffmpeg.input('in2.mp4')

+v1 = in1['v'].hflip()

+a1 = in1['a']

+v2 = in2['v'].filter_('reverse').filter_('hue', s=0)

+a2 = in2['a'].filter_('areverse').filter_('aphaser')

+joined = ffmpeg.concat(v1, a1, v2, a2, v=1, a=1).node

+v3 = joined[0]

+a3 = joined[1].filter_('volume', 0.8)

+out = ffmpeg.output(v3, a3, 'out.mp4')

+out.run()

+```

+

## [Jupyter Frame Viewer](https://github.com/kkroening/ffmpeg-python/blob/master/examples/ffmpeg-numpy.ipynb)

+

+```python

+in1 = ffmpeg.input('in1.mp4')

+in2 = ffmpeg.input('in2.mp4')

+v1 = in1['v'].hflip()

+a1 = in1['a']

+v2 = in2['v'].filter_('reverse').filter_('hue', s=0)

+a2 = in2['a'].filter_('areverse').filter_('aphaser')

+joined = ffmpeg.concat(v1, a1, v2, a2, v=1, a=1).node

+v3 = joined[0]

+a3 = joined[1].filter_('volume', 0.8)

+out = ffmpeg.output(v3, a3, 'out.mp4')

+out.run()

+```

+

## [Jupyter Frame Viewer](https://github.com/kkroening/ffmpeg-python/blob/master/examples/ffmpeg-numpy.ipynb)